
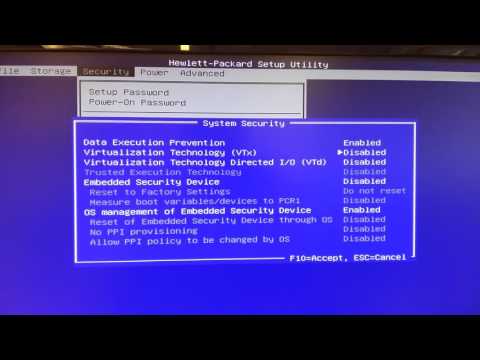

You must enable hardware virtualization for the particular VM on which you want to use it. You therefore need a processor that supports VT-x or AMD-V to run 64-bit guests.

by Martin Brinkmann on March 20, 2010 in Windows - Last Update: August 11, 2016 - 4 comments

Update: Microsoft has integrated the hardware virtualization patch into Service Pack 1 for Windows 7. It is no longer necessary to download the fix manually. If you have not installed the service pack already, download it from Microsoft to do so.

Not many users have problems running software on Windows 7, as the operating system seems to be compatible with most available programs. Microsoft has nevertheless added the so-called Windows XP Mode to the operating system. This mode provides users with access to a virtual Windows XP system that integrates smoothly into the Windows 7 operating system.

The reason for the integration is to provide users of the operating system with options to run software in Windows XP legacy mode. This can be useful for software that works under XP but does not run under Windows 7 for whatever reason.

Windows XP Mode required a processor with hardware virtualization support when it was introduced. This meant that it could not be used on some systems if support for the feature was missing.

Users can find out whether their PC supports hardware virtualization by downloading Microsoft's Hardware Assisted Virtualization Tool.

Microsoft announced two days ago that it had removed the hardware virtualization requirement of Windows XP Mode, and that it would release a patch for the operating system.

The effect is that Windows XP Mode can be run on systems that do not support CPU hardware virtualization. The only remaining requirement is an edition of Windows 7 that supports XP Mode: Professional, Ultimate, or Enterprise.

The patches have now been made available for 32-bit and 64-bit editions of Windows 7. Once installed, they remove the hardware virtualization requirement, which gets rid of error messages that users experienced previously, such as 'Unable to start Windows Virtual PC because hardware-assisted virtualization is disabled' and 'Cannot start Windows Virtual PC Host Process. Check the System event log for more details'.

A genuine validation check is performed before the download becomes available.

Update for Windows 7 (KB977206)

Update for Windows 7 for x64-based Systems (KB977206)

Install this update to remove the prerequisites required to run Windows Virtual PC and XP mode

Have you been making use of Windows XP Mode in Windows 7? If so, how was your experience?

Windows 7 Patch Removes Hardware Virtualization Requirement Of Windows XP Mode

Description

Microsoft announced recently that it made the decision to remove the hardware virtualization requirement from Windows 7's Windows XP Mode.

Author

Ghacks Technology News


The Packaged CCE deployment at the customer site must run in a duplexed environment with a pair of Unified Computing System (UCS) servers. These servers are referred to as Side A Host and Side B Host.

The two Packaged CCE servers must use the same server model.

Packaged CCE supports UCS (BE7000H or BE7000M) servers for the remote sites. It supports Spec-based servers if they align with the specifications mentioned in Packaged Collaboration Virtualization Hardware.

When ordering Packaged CCE with the UCS B200 M4, customers must either already have a supported UCS B-Series platform infrastructure and SAN in their data center or must purchase these separately. UCS B-Series blades are not standalone servers and have no internal storage.

The Packaged CCE UCS B-Series Fabric Interconnects Validation Tool performs checks on currently deployed UCS B-Series Fabric Interconnect clusters to determine compliance with Packaged CCE requirements. This tool does not test all UCS B-Series requirements, only those related to Packaged CCE compliance. For more information, refer to Packaged CCE UCS B-Series Fabric Interconnects Validation Tool.

For installing Cisco Unified Communications Manager, use cucm_11.5_vmv8_v1.1.ova.

Packaged CCE deployments on a UCS B-Series platform require a supported Storage Area Network (SAN). Packaged CCE blade servers do not come with internal storage and must use Boot from SAN.

Packaged CCE supports the following SAN transports in Fibre Channel (FC) End Host or Switch Mode:

Packaged CCE requirements for SAN:

The following SAN/NAS storage technologies are not supported:

SAN LUNs with SATA/SAS 7200 RPM and slower disk drives are only supported where used in Tiered Storage Pools containing SSD (Solid State) and 10000 and/or 15000 RPM SAS/FC HDDs.

While customers may use thin-provisioned LUNs, Packaged CCE VMs must be deployed thick-provisioned. SAN LUNs must therefore have sufficient storage space to accommodate all application VMs at deployment.

Side B Server Component Configurations for C240 M3, C240 M4, C240 M5 and Specification-Based Servers

The illustration below shows the reference design for all Packaged CCE deployments on UCS C-Series servers and the network implementation of the vSphere vSwitch design.

This design calls for using the VMware NIC Teaming (without load balancing) of virtual machine network interface controller (vmnic) interfaces in an Active/Standby configuration through alternate and redundant hardware paths to the network.

The network side implementation does not have to exactly match this illustration, but it must allow for redundancy and must not allow for single points of failure affecting both Visible and Private network communications.

Note: The customer also has the option, at their discretion, to configure VMware NIC Teaming on the Management vSwitch on the same or separate switch infrastructure in the data center.

Requirements:

This figure illustrates a configuration for the vSwitches and vmnic adapters on a UCS C-Series server using the redundant Active/Standby vSwitch NIC Teaming design. The configuration is the same for the Side A server and the Side B server.

The illustration below shows the reference design for Packaged CCE deployments on UCS C-Series servers with the VMware vNetwork Distributed Switch.

You must use Port Group override, similar to the configuration for the UCS-B series servers. See the VMware vSwitch Design for Cisco UCS B-Series Servers section below.

The reference and required design for the Ethernet uplinks of the Packaged CCE Visible and Private networks on UCS C-Series servers uses the VMware default of IEEE 802.1Q (dot1q) trunking, which is referred to as Virtual Switch VLAN Tagging (VST) mode. This design requires that specific settings be used on the uplink data center switch, as described in the example below.

Improper configuration of up-link ports can directly and negatively impact system performance, operation, and fault handling.

Note: All VLAN settings are given for example purposes. Customer VLANs may vary according to their specific network requirements.

Note:

The figure below shows the virtual to physical Packaged CCE communications path from application local OS NICs to the data center network switching infrastructure.

The reference design depicted uses a single virtual switch with two vmnics in Active/Active mode, with Visible and Private network path diversity aligned through the Fabric Interconnects using the Port Group vmnic override mechanism of the VMware vSwitch.

Alternate designs are allowed, such as those resembling that of UCS C-Series servers where each Port Group (VLAN) has its own vSwitch with two vmnics in Active/Standby configuration. In all designs, path diversity of the Visible and Private networks must be maintained so that both networks do not fail in the event of a single path loss through the Fabric Interconnects.

The figures in this topic illustrate the two vmnic interfaces with Port Group override for the VMware vSwitch on a UCS B-Series server using an Active/Active vmnic teaming design. The configuration is the same for the Side A and Side B servers.

The following figure shows the Public network alignment (preferred path via override) to the vmnic0 interface.

The following figure shows the Private networks alignment to the vmnic1 interface.

When using Active/Active vmnic interfaces, Active/Standby can be set per Port Group (VLAN) in the vSwitch dialog in the vSphere Web Client, as shown:

Ensure that the Packaged CCE Visible and Private networks Active and Standby vmnics are alternated through Fabric Interconnects so that no single path failure will result in a failover of both network communication paths at one time. In order to check this, you may need to compare the MAC addresses of the vmnics in vSphere to the MAC addresses assigned to the blade in UCS Manager to determine the Fabric Interconnect to which each vmnic is aligned.
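The MAC comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the dictionary layout, and the "A"/"B" fabric labels are hypothetical, and the MAC addresses would have to be copied by hand from vSphere and UCS Manager. Nothing here calls a VMware or Cisco API.

```python
def fabric_for_vmnic(vmnic, vmnic_macs, mac_fabrics):
    """Return the Fabric Interconnect label behind one vmnic.

    vmnic_macs  : vmnic name -> MAC address as shown in vSphere (assumed input)
    mac_fabrics : MAC address -> fabric label as shown in UCS Manager (assumed input)
    """
    # Normalize case so both tools' spellings of a MAC compare equal.
    fabrics = {mac.lower(): fabric for mac, fabric in mac_fabrics.items()}
    return fabrics[vmnic_macs[vmnic].lower()]


def paths_are_diverse(active_vmnic, vmnic_macs, mac_fabrics):
    """True when the active Visible and Private vmnics use different fabrics."""
    visible = fabric_for_vmnic(active_vmnic["visible"], vmnic_macs, mac_fabrics)
    private = fabric_for_vmnic(active_vmnic["private"], vmnic_macs, mac_fabrics)
    return visible != private
```

A result of `False` would mean that both networks currently ride the same Fabric Interconnect, so a single path loss could take both down at once.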

UCS B-Series servers may also be designed to have 6 or more vmnic interfaces with separate vSwitch Active/Standby pairs, similar to the design used for UCS C-Series servers. This design still requires that the active paths for the Visible and Private networks be alternated between the two Fabric Interconnects.

Use the UCS B-series example configuration as a guideline for configuring the UCS B-series with a VMware vNetwork Distributed Switch.

The figure below shows the Packaged CCE reference design for Nexus 1000V with UCS B-Series servers.

Except for the reference diagram, the requirements and configuration for the Nexus 1000V are the same for Packaged CCE and Unified CCE. For details on using the Nexus 1000V, see Nexus 1000v Support in Unified CCE.

This topic provides examples of data center switch uplink port configurations for connecting to UCS B-series Fabric Interconnects.

There are several supported designs for configuring Ethernet uplinks from UCS B-Series Fabric Interconnects to the data center switches for Packaged CCE. Virtual Switch VLAN Tagging is required, with EtherChannel / Link Aggregation Control Protocol (LACP) and Virtual PortChannel (vPC) being options depending on data center switch capabilities.

The required and reference design for Packaged CCE Visible and Private network uplinks from UCS Fabric Interconnects uses a Common-L2 design, where both Packaged CCE VLANs are trunked to a pair of data center switches. Customers may also choose to trunk other management (including VMware) and enterprise networks on these same links, or use a Disjoint-L2 model to separate these networks from Packaged CCE. Both designs are supported, though only the Common-L2 model is used here.

Note: All VLAN, vPC and PortChannel IDs and configuration settings are given for example purposes. Customer VLANs, IDs and any vPC timing and priority settings may vary according to their specific network requirements.

Improper configuration of up-link ports can directly and negatively impact system performance, operation, and fault handling.

In this example, the UCS Fabric Interconnect Ethernet uplinks connect to a pair of Cisco Nexus 5500 series switches using LACP and vPC. UCS Fabric Interconnects require LACP where PortChannel uplinks are used, regardless of whether they are vPC.

Note: Cisco Catalyst 10G switches with VSS also may be used in a similar uplink topology with VSS (MEC) uplinks to the Fabric Interconnects. That IOS configuration is not described here, and differs from the configuration of NX-OS.

Note: Additional interfaces can be added to the vPCs (channel-groups) to increase the aggregate uplink bandwidth. These interfaces must be added symmetrically on both Nexus 5500 switches.

In this example, a pair of Cisco Nexus 5500 series switches is uplinked to the UCS Fabric Interconnects without PortChannels or vPC (the Nexus 5500 pair may still be vPC enabled).

Note: Cisco Catalyst switches capable of 10G Ethernet also may use a similar uplink topology. That IOS configuration is not described here, and may differ from NX-OS configuration.

In this example, a Nexus 5500 pair uses non-vPC PortChannel (EtherChannel with LACP) uplinks to the UCS Fabric Interconnects.

Note: Cisco Catalyst switches capable of 10G Ethernet also may use a similar uplink topology. That IOS configuration is not described here, and may differ from NX-OS configuration.

This section details the Packaged CCE application IO requirements to be used for Storage Area Networks (SAN) provisioning. You must use these data points to properly size and provision LUNs to be mapped to datastores in vSphere to then host the Packaged CCE applications. Partners and Customers should work closely with their SAN vendor to size LUNs to these requirements.

Packaged CCE on UCS B-Series does not require a fixed number of LUNs/Datastores. Instead, customers may use as few as a single LUN, or use a 1-to-1 mapping of application VM to LUN, provided that the IOPS throughput and latency requirements of the Packaged CCE applications are met. Any given LUN design will vary from vendor to vendor and from SAN model to SAN model. Work closely with your SAN vendor to determine the best solution to meet the requirements given here.

The IOPS provided in this topic are for Packaged CCE on-box components only. For any off-box applications, refer to each application's documentation for IOPS requirements.

Requirements and restrictions for SAN LUN Provisioning include the following:

Note: In the following IOPS and KBps tables:

The list below designates which VMware features can be supported by Packaged CCE while in production under load due to the known or unpredictable behavior they may have on the applications. Many of the VMware features that cannot be supported in production can be used within a customer's planned maintenance downtime, where any interruption will not impact business operations. Some unsupported features will by their function cause violation of the Packaged CCE validation rules.

Packaged CCE supports specification-based hardware. This section provides the supported server hardware, component version, and storage configurations.

Note: Specification Based Hardware is supported only if you have installed Packaged CCE 11.6(1) ES9 patch or 11.6(2) MR.

Minimum matching hardware specifications for the Packaged CCE core (A & B side) component servers are as follows:

Notes:

Packaged CCE enforces the following limits for Specs-based servers. Packaged CCE will not allow you to deploy additional Virtual Machines to a Specs-based server once any one of these limits is reached. For this reason, you should size your servers accordingly for your deployment needs.

Notes:

The following Virtual Machines are Packaged CCE core components, which cannot be split across multiple servers or removed from the solution. The minimum server specifications calculated at the end of the Packaged CCE A Side Core Applications table are for the Packaged CCE core applications only, but you can add extra optional VMs to the server using the same formulas to find the new minimum hardware requirements. See the Optional Application VM specifications and formula rules below.

Packaged CCE A Side Core Applications

Minimum Server Specifications:

Minimum Cores: 12; MHz/Core: 3577; Minimum MHz: 42924; Minimum RAM (GB): 87; Minimum Disk Space (GB): 2270

Packaged CCE B Side Core Applications

Minimum Server Specifications: B Side server must match A Side server.

Packaged CCE Optional Applications

Note: Third-party application VMs must use resource reservations (MHz) for coresidency support with oversubscription. Otherwise, they are assumed to consume a complete core per vCPU of the third-party VM.

You can include optional applications on the Packaged CCE Core servers or place them on separate servers. In either case, use the following formulas to determine the hardware requirements for your specific solution design.

Hardware calculated formulas as enforced by PCCE rules validation

CPU Oversubscription formula:

(# socketed processors x # cores per processor) x 2 = vCPU capacity

Example: (2 processors x 10 cores per) x 2 = 40 vCPU capacity for resident VMs

CPU Reservation formula:

(# socketed processors x # cores per processor) x (processor standard MHz) x 0.65 = MHz capacity

Example: (2 processors x 10 cores per) x (2600) x 0.65 = 33800 MHz capacity for resident VMs

Memory Reservation formula:

(server RAM in GB) x 0.8 = vRAM capacity

Example: (128 GB) x 0.8 = 102 GB capacity for resident VMs

Storage Usage formula:

(formatted datastore in GB) x 0.8 = storage capacity

Example: (1870 GB) x 0.8 = 1496 GB capacity for resident VMs

Note: Multiple datastores/arrays/LUNs are supported, where each attached unit must meet this formula's requirement.
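The four capacity formulas above can be checked with a short Python sketch. The `HostSpec` class, its field names, and the `host_capacity` function are hypothetical names introduced for illustration; only the multipliers (2, 0.65, 0.8, 0.8) come from the text. Integer percentages are used instead of `0.65` and `0.8` to avoid floating-point rounding surprises.

```python
from dataclasses import dataclass


@dataclass
class HostSpec:
    """Physical server description (illustrative field names)."""
    sockets: int
    cores_per_socket: int
    core_mhz: int        # standard clock per core, in MHz
    ram_gb: int
    datastore_gb: int    # formatted size of one datastore


def host_capacity(host: HostSpec) -> dict:
    """Apply the four capacity formulas to one host and one datastore."""
    cores = host.sockets * host.cores_per_socket
    return {
        "vcpu": cores * 2,                         # CPU oversubscription
        "mhz": cores * host.core_mhz * 65 / 100,   # CPU reservation (65%)
        "vram_gb": host.ram_gb * 80 / 100,         # memory reservation (80%)
        "storage_gb": host.datastore_gb * 80 / 100 # storage usage (80%)
    }


# Values taken from the examples in the text.
print(host_capacity(HostSpec(2, 10, 2600, 128, 1870)))
```

With the example values from the text, this yields 40 vCPU, 33800 MHz, 102.4 GB of vRAM (quoted as 102 GB above), and 1496 GB of storage. Each additional datastore would need its own storage check.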

Requirements calculated formulas (to find minimum server specifications based on VMs selected)

CPU Oversubscription formula:

(SUM vCPU)/2 = minimum physical processor cores required

Example: The design calls for 30 vCPU total for all VMs to be run on a server. 30/2 = 15, which in practice means 16 cores (as 2x8 or 1x16 core processor options).

Important Note: Core MHz plays an important role as well. Additional cores or a faster core speed can affect the decision on the required number of processors and cores per processor. For instance, if in the example above (16 x 2500 MHz x 0.65) is not sufficient for the MHz reservation required, you might need either a higher core speed or more cores to make up the difference.

CPU Reservation formula:

ROUNDUP(((SUM MHz)/65)*100) = minimum server MHz required

Example: (30000/65)*100 = 46154 (rounded up). Use this in conjunction with the CPU Oversubscription formula to find an available processor specification that meets your requirements. For example, 16 cores at 2.6 GHz is only 41600 MHz, and does not meet the requirement. But 20 cores x 2.5 GHz or 16 cores x 3.0 GHz would be 50000 and 48000 MHz respectively, and would meet the requirement.

Memory Reservation formula:

(SUM vRAM)*1.25 = minimum physical RAM required

Example: (68)*1.25 = 85 GB RAM required minimum. This is usually rounded up to the nearest multiple of 32 or 64 (e.g. 96 or 128 GB – vendor/server specs may vary RAM:CPU bank rules).

Storage Usage formula:

(SUM storage to be assigned to a datastore)*1.25 = minimum required formatted space on that datastore

Note: Multiple datastores, arrays, or LUNs are supported, where each attached unit must meet this formula's requirement.
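The requirement formulas above run in the opposite direction: from the VMs selected to the minimum server. The Python sketch below applies them to summed VM totals. The function names are hypothetical, and rounding the RAM and storage results up to whole GB is an assumption made here for convenience. The text only specifies rounding for the MHz figure.

```python
import math


def minimum_server_specs(total_vcpu, total_mhz, total_vram_gb, total_storage_gb):
    """Derive minimum server specifications from the summed VM requirements."""
    return {
        "cores": math.ceil(total_vcpu / 2),              # (SUM vCPU)/2
        "mhz": math.ceil(total_mhz * 100 / 65),          # (SUM MHz)/65*100, rounded up
        "ram_gb": math.ceil(total_vram_gb * 1.25),       # (SUM vRAM)*1.25
        "datastore_gb": math.ceil(total_storage_gb * 1.25),
    }


def meets_mhz(cores, core_mhz, required_mhz):
    """True when cores x per-core MHz covers the required server MHz."""
    return cores * core_mhz >= required_mhz


# 30 vCPU, 30000 MHz, and 68 GB vRAM come from the examples in the text;
# the 1000 GB storage figure is an arbitrary illustration.
specs = minimum_server_specs(30, 30000, 68, 1000)
print(specs)
print(meets_mhz(16, 2600, specs["mhz"]))  # 41600 MHz
print(meets_mhz(20, 2500, specs["mhz"]))  # 50000 MHz
```

The core count is a floor, not a purchase recommendation: as the text notes, 15 cores becomes 16 in practice, and the MHz check may push the choice toward more or faster cores.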

For more information on specification-based hardware, see Collaboration Virtualization Hardware.

This section lists the specifications for the C240 M3 server. The customer deployment must run in a duplexed environment using a pair of core Unified Computing System (UCS) C240 M3 servers known as Side A and Side B. Remote Expert Mobile must be installed on its own pair of Side A and Side B servers. It must not be installed co-resident on Packaged CCE Side A and Side B servers.

Cisco Remote Expert Mobile supports specification-based hardware, but limits this support to only UCS B-Series blade and C-Series server hardware. This section provides the supported server hardware, component version, and storage configurations. For more information about specification-based hardware, see UC Virtualization Supported Hardware at UC_Virtualization_Supported_Hardware.

Note: Specification-based and over-subscription policy: For specification-based hardware, total CPU reservations must be within 65 percent of the available CPU of the host. Total memory reservations must be within 80 percent of the available memory of the host. Total traffic must be within 50 percent of the maximum of the network interface card. IOPS for storage must meet the VM IOPS requirement.
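As a rough illustration of the policy in the note above, the following Python sketch flags which of the three percentage limits a planned host would exceed. The function name, parameter names, and units are hypothetical, and treating "within" as "less than or equal to" is an assumption. The storage IOPS requirement is not modeled because it depends on per-VM figures not given here.

```python
def policy_violations(cpu_reserved_mhz, host_mhz,
                      mem_reserved_gb, host_mem_gb,
                      traffic_mbps, nic_max_mbps):
    """Return the names of the limits that the planned reservations exceed."""
    checks = [
        ("cpu", cpu_reserved_mhz, host_mhz, 65),      # 65% of host CPU
        ("memory", mem_reserved_gb, host_mem_gb, 80), # 80% of host memory
        ("network", traffic_mbps, nic_max_mbps, 50),  # 50% of NIC maximum
    ]
    # Compare used*100 with total*percent to stay in exact integer arithmetic.
    return [name for name, used, total, pct in checks if used * 100 > total * pct]


print(policy_violations(30000, 52000, 100, 128, 400, 1000))
print(policy_violations(35000, 52000, 110, 128, 600, 1000))
```

An empty list means that all three limits are respected; any names returned identify the resource that needs more headroom.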

For more information regarding Virtual machine installation and configuration, refer to “Remote Expert Mobile—Installation and Configuration Guide 10.6”.

If using a UCS Tested Reference Configuration or specifications-based system, the minimum requirements for development and production systems are:

Refer to the VMware developer documentation for additional configuration and hardware requirements. Use the Cisco Unified Computing System (CUCS) to simplify and maximize performance. See Unified_Communications_in_a_Virtualized_Environment for the current list of supported UCS Tested Reference Configurations and specs-based supported platforms.

Ensure that:

Remote Expert Mobile can co-reside with other applications (VMs occupying same host) subject to the following conditions:

Note: Remote Expert Mobile must be installed on its own pair of Side A and Side B servers. It must not be installed co-resident on Packaged CCE Side A and Side B servers.

A REAS node can be deployed in a small OVA or large OVA.

A REMB node can be deployed in a large OVA. Transcoding performance between VP8 and H.264, as well as between Opus and G.711/G.729, varies depending on video resolution, frame rate, and bitrate, as well as on server type, virtualized or bare-metal OS installs, processors, and codec types. However, general guidelines for REMB nodes are as follows.

The following guidelines apply when clustering Cisco Remote Expert Mobile for mobile and web access:

Note: Remote Expert Mobile capacity planning must also consider the capacity of the associated Unified CM cluster(s) and CUBE nodes.

Remote Expert Mobile 11.6(1)
